VLAs are dead, long live World Action Models - a summary of Jim Fan's Robotics End Game talk

Jim Fan argues robotics will follow the exact LLM playbook - and VLAs are already being replaced by World Action Models.

by YK SugiOur generation was born too late to explore the Earth and too early to explore the stars, but we are born just in time to solve robotics.

That's how Jim Fan closed his talk at Sequoia's AI Ascent 2026 - and it might be the most concise summary of where robotics stands right now.

Fan leads Nvidia's embodied autonomous research group (essentially Nvidia Robotics). At last year's AI Ascent he was a standout presenter. This year, he came back with a bolder claim: robotics is entering its end game, and the playbook is already written - because it's the same one that LLMs already followed.

Here's a breakdown of his 20-minute talk.

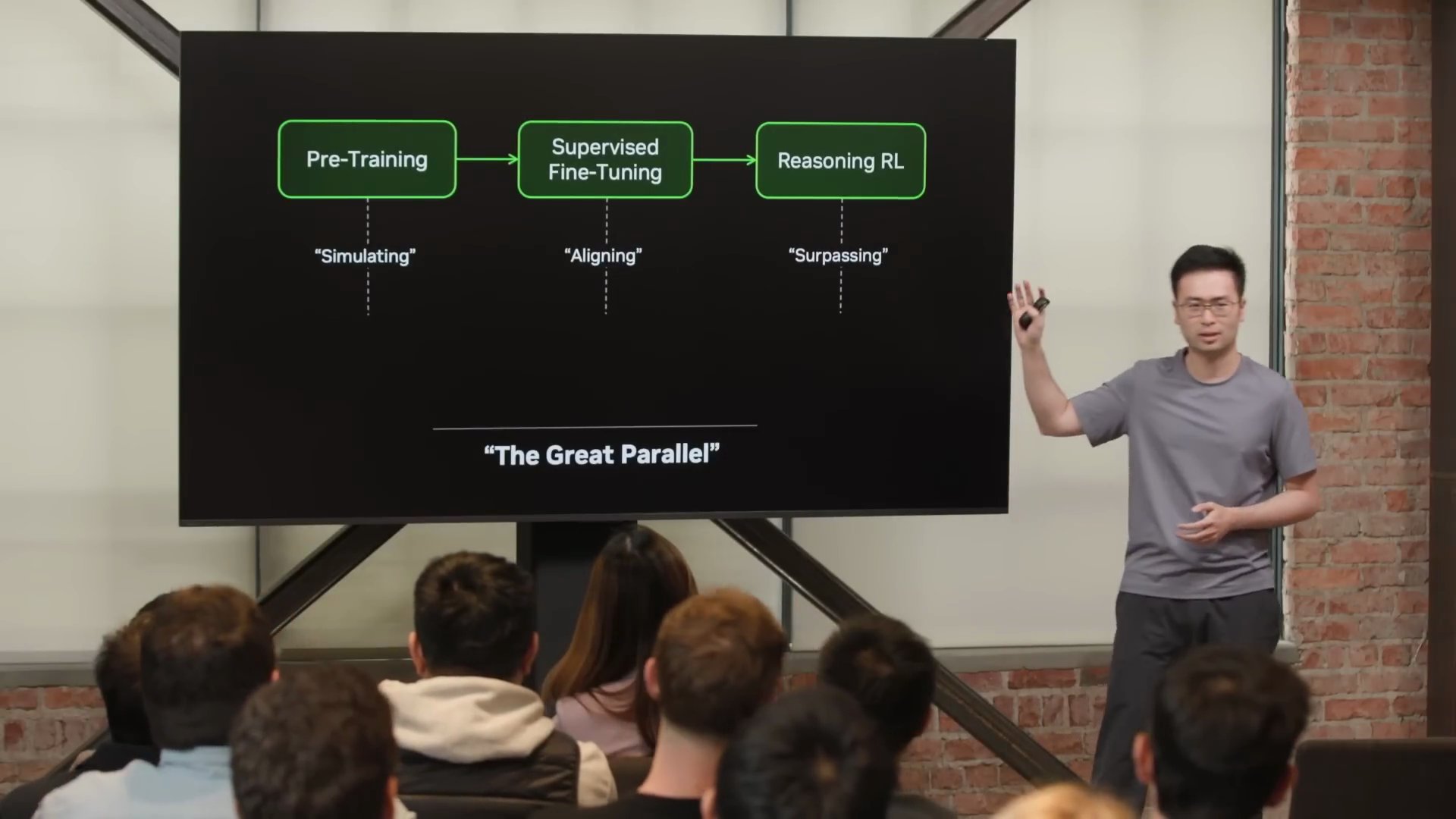

The Great Parallel

Fan's core thesis is what he calls "the great parallel" - the idea that robotics will follow the exact same trajectory as large language models. He frames it bluntly: "as any self-respecting scientist would do, I copy homework and I give it a new name."

The LLM path went through four stages in six years:

- Pre-training (GPT-3) - learning the shape of language through next-token prediction

- Supervised fine-tuning (InstructGPT) - aligning the model to do useful work

- Reasoning (o1) - using reinforcement learning to surpass imitation learning

- Auto research - accelerating the loop beyond what's humanly possible

The robotics parallel: instead of simulating strings, simulate the next physical world state. Align through action fine-tuning onto the slice of that simulation that matters for real robots. Then let RL carry the last mile.

Why VLAs fall short

Vision-language-action models (VLAs) have dominated robotics for the past three years. Models like pi0 and Nvidia's own GR00T fall into this category. The approach: take a vision-language model and graft an action head on top.

Fan's critique is pointed. He argues these models are really "LVAs" - because the most parameters are dedicated to language. Language is the first-class citizen, followed by vision, then action. The result: VLAs are "great at encoding knowledge and nouns, but not so much at physics and verbs. It's kind of head heavy in the wrong places."

His example: the original VLA paper showed a robot moving a Coke can to a picture of Taylor Swift - impressive generalization to an unseen concept, but not the kind of pre-training ability robotics actually needs.

Video world models: the unlikely hero

The replacement for VLAs starts in an unlikely place - AI-generated video. Fan acknowledges the irony. Nobody takes AI video slop of cats playing banjo on security cameras seriously. But something important is happening under the hood.

Video models like VEO-3 are learning to simulate physics internally. They pick up gravity, buoyancy, lighting, reflection, and refraction - all by themselves. None of it is coded in. As Fan puts it: "Physics emerge by predicting the next blob of pixels at scale."

Even visual planning emerges - VEO-3 can solve mazes by running simulation forward in pixel space. (Though Fan notes it sometimes cheats: "VEO-3 figures out that if you're not looking, geometry is optional." He calls this "physics slop.")

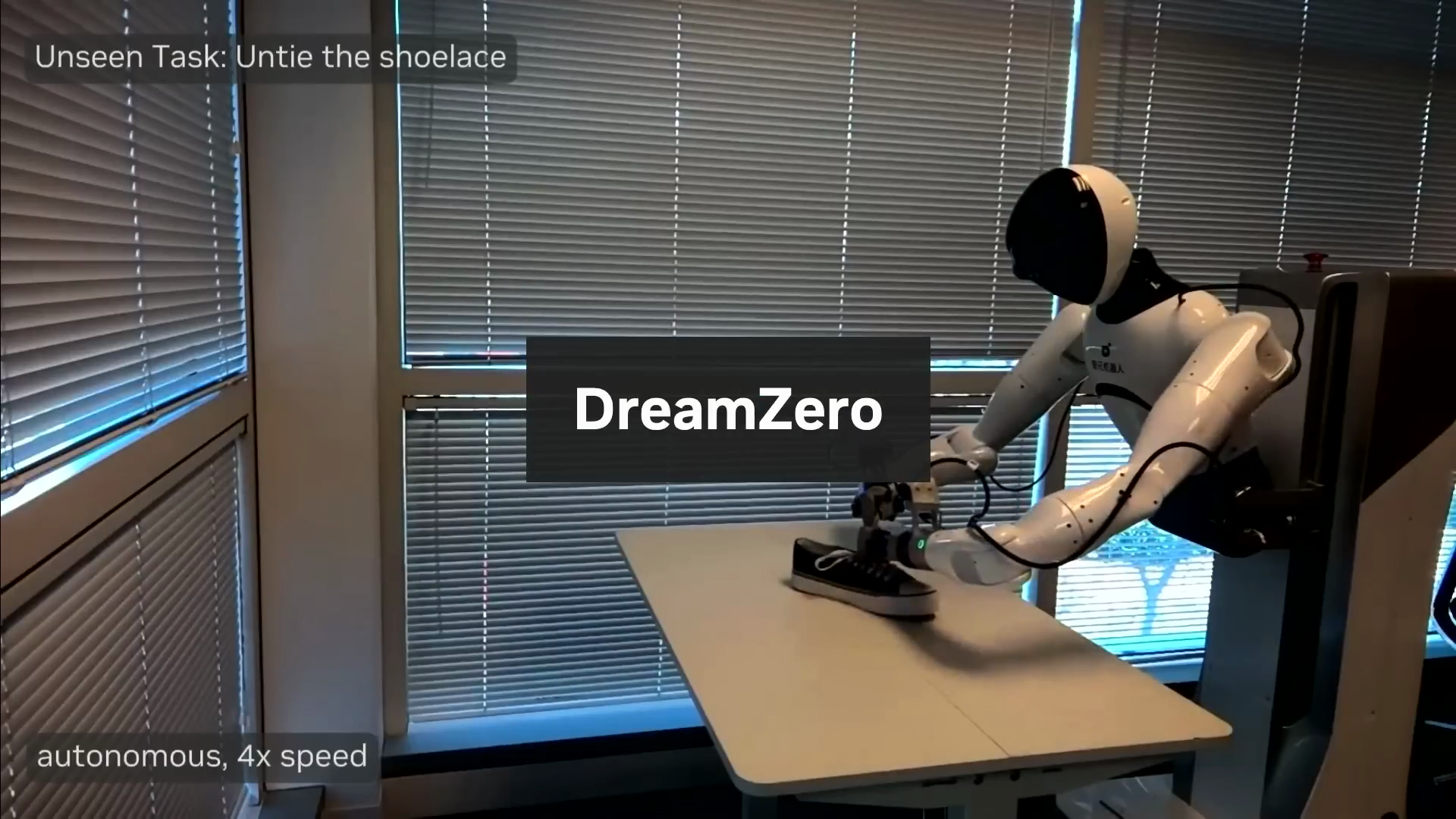

Dream Zero: from world models to robot actions

To make world models useful for robotics, Nvidia built Dream Zero - a policy model that "dreams a couple seconds into the future and acts accordingly."

The key insight: motor actions are high-dimensional continuous signals - which look a lot like pixels. So Dream Zero jointly decodes predicted future video frames and robot actions at the same time. The correlation is tight: "If the video prediction works, the action works. If the video hallucinates, the action fails."

The result is zero-shot generalization to tasks and verbs never seen in training. Fan compares it to GPT-2 - not 100% robust, but getting the shape of the motion correct in every case. It's a first step toward open-ended, open-vocabulary prompting for robotics.

Fan calls this new paradigm World Action Models (WAMs). And he's not subtle about the transition: "Let's all take a moment of silence for our dear friend VLAs. They've served us well. Rest in peace. Long live world action models."

The data revolution: teleop is dying

The model story is only half the picture. Fan spends equal time on data strategy - and his argument is just as aggressive.

Teleoperation has dominated robotics data collection for the past three years. VR headsets, optimized streaming latency, complex rigs that Fan says look like "medieval torture devices." The fundamental problem: teleop is upper bounded by 24 hours per robot per day. In practice, it's "more like 3 hours per robot per day, and only when the robot god is merciful."

Fan lays out three tiers of data collection, in order of scalability:

Tier 1: Data wearables (UMI)

Instead of teleoperating a robot, you wear the robot end-effector on your own hand. This is the Universal Manipulation Interface (UMI) approach. Fan calls UMI "perhaps one of the greatest papers ever written in robotics data" - it spawned two unicorn startups (Genesis and Sunday).

Nvidia extended this with DexUMI - an exoskeleton with a one-to-one mapping to five-finger dexterous robot hands. The results: fully autonomous robot policies trained on zero teleoperation data.

Tier 2: Egocentric video (Ego-Scale)

Data wearables are better than teleop but still cumbersome. Fan draws a comparison to Tesla's FSD - when you drive, you contribute to a massive data flywheel without even noticing. Robotics needs the same thing: data collection that fades into the background.

The answer is human egocentric video with hand tracking and language annotations. Nvidia's Ego-Scale system is pre-trained on 21,000 hours of in-the-wild egocentric human data with zero robot data. Then action fine-tuning uses only 50 hours of mocap data and 4 hours of teleop - less than 0.1% of the training mix.

The result: an end-to-end policy that maps camera pixels directly to 22-degree-of-freedom dexterous robot hands. It generalizes to tasks like sorting cards, manipulating syringes, and learning new shirt-folding strategies from a single demonstration.

Perhaps the biggest finding: they discovered a neural scaling law for dexterity - a clean log-linear relationship between pre-training hours and validation loss. Six years after the original neural scaling law for language models, robotics now has its own.

Fan's scalability chart puts it in perspective:

- Teleop: least scalable

- Data wearables: hundreds of thousands of hours

- Egocentric video: easily 10 million hours in the next year if the FSD-style flywheel spins up

His prediction: teleop will drop to negligible amounts within a year or two. The main diet for robotics will be egocentric videos.

Dream Dojo: neural simulators for RL

The final piece is scaling environments for reinforcement learning. Just like LLM labs spend significant budgets acquiring millions of coding environments for RL, robotics needs the same.

Nvidia's approach has two parts:

-

Real-to-sim-to-real: Take an iPhone photo, run it through a 3D world scan pipeline to extract interactive objects, then augment infinitely with "digital cousins" in simulation. Your iPhone becomes a pocket world scanner.

-

Dream Dojo: Video world models turned into full neural simulators. Dream Dojo takes continuous action signals as input and outputs RGB frames and sensor states in real time. "No physics equation, no graphics engine involved." Every pixel is generated by the model.

The new post-training paradigm: massively parallel RL running across a few real robot stations, graphics cores doing world scans, and heavy inference compute running world models. Or as Fan summarizes: "Compute now equals environment now equals data."

Three milestones to the finish line

Fan frames the remaining robotics roadmap as three "achievements" on a civilizational technology tree:

-

Physical Turing test - across a wide range of activities, you can't tell whether a human or robot did the task. Fan estimates two to three years away.

-

Physical API - robot fleets configured like software through APIs and command lines. This enables "lights-out factories - essentially printers of atoms" that take design files as input and output fully assembled products. Also: automated wet labs for chemistry, biology, and medicine.

-

Physical auto-research - robots designing, improving, and building the next iteration of themselves beyond what's humanly possible.

Is this science fiction? Fan's argument: it took 14 years to go from AlexNet (2012) barely recognizing cats vs. dogs to agentic auto-research in 2026. Add another 14 years and you land at 2040. And "technology does not advance linearly, it advances exponentially."

His closing bet: 95% certainty that we reach the end of the robotics technology tree by 2040.

Key takeaways

- VLAs are being replaced by World Action Models (WAMs) that use video world models as their pre-training backbone instead of language models. Vision and action become first-class citizens.

- Teleoperation is on its way out. The future of robotics data is egocentric human video - and Nvidia has already shown scaling laws that validate this approach.

- Neural simulators (Dream Dojo) change the RL equation. Compute equals environments equals data - no physics engine required.

- The LLM playbook transfers directly to robotics. Pre-train on world simulation, align through action fine-tuning, let RL carry the last mile.

The full talk is worth watching: Robotics' End Game: Nvidia's Jim Fan (20 min, Sequoia Capital).

Learn more

If you want to keep learning about physical AI, feel free to subscribe to our newsletter. If you're in the process of setting up petabyte-scale data pipelines for physical AI, you might want to check out MultiBase - the data warehouse for physical AI, with optimized data loading for the latest NVIDIA chips.