Prompting with DataFrames: Massively Parallel LLM Generation is Here

Discover how Daft's prompt function revolutionizes LLM workflows with massively parallel context engineering on DataFrames.

by Everett Kleven

TLDR

We're introducing a new way to work with language models at scale. Daft's prompt function brings massively parallel text generation to DataFrames, making it trivial to run LLM operations over thousands or millions of rows with automatic batching and parallelization. Whether you're doing synthetic data generation, knowledge extraction, or batch tool-calling, you can now scale these workloads efficiently without wrestling with API rate limits or building complex batching logic.

import daft
from daft.functions import prompt

# read some sample data
df = daft.from_pydict({
    "input": [
        "What sound does a cat make?",
        "What sound does a dog make?",
        "What sound does a cow make?",
        "What does the fox say?",
    ]
})

# generate responses using a chat model
df = df.with_column("response", prompt(daft.col("input"), model="gpt-5.1"))

df.show()

With Daft's prompt function, prompting becomes a first-class DataFrame operation. Instead of building bespoke pipelines, you compose expressions: pass text, files, or images as columns; emit structured outputs as nested types; and let Daft take care of automatic batching, parallelization, retries, caching, and provider abstraction. Whether you're generating synthetic data, extracting knowledge from PDFs, calling tools at scale, or steering reasoning models, you can do it across thousands--or millions--of rows using the same mental model you already use for analytics.

The Problem: Prompt Engineering Doesn't Scale

If you've worked with LLMs in production, you've likely faced these challenges:

- Manual batching logic - Writing custom code to batch requests, handle rate limits, and retry failed calls

- Inefficient resource utilization - GPUs sitting idle between batches or during data preprocessing

- Context management complexity - Trying to pass images, PDFs, and structured data to models without a unified interface

- Data accessibility - The context you want to curate doesn't live only on your laptop; it also lives in cloud storage buckets, databases, and repositories

- Poor cache utilization - Missing opportunities to reuse computation when prompts share common prefixes

These inconveniences translate directly to higher costs and slower iteration cycles. A data scientist shouldn't need to become a distributed systems engineer just to run a model over a dataset.

The Solution: Prompt as a DataFrame Operation

Daft's prompt function treats prompting as a first-class DataFrame operation. This seemingly simple abstraction unlocks powerful capabilities:

1. Automatic Parallelization

Just like how you don't think about parallelizing a SELECT statement in SQL, you shouldn't have to think about parallelizing LLM calls. Daft handles this automatically:

import daft
from daft.functions import prompt

# Read any data source
df = daft.read_parquet("s3://my-bucket/customer-reviews/*.parquet")

# Generate sentiment analysis at scale
df = df.with_column(
    "sentiment",
    prompt(
        daft.col("review_text"),
        system_message="Classify the sentiment as positive, negative, or neutral.",
        model="gpt-5-mini"
    )
)

# Daft automatically batches and parallelizes across your cluster
df.write_parquet("s3://my-bucket/analyzed-reviews/")

2. Native Multimodal Support

Daft is purpose-built for multimodal AI workloads. Pass text, images, and files as a list of expressions with no manual message building required:

import daft
from daft.functions import prompt, download, decode_image

# Load images and text together
df = daft.from_glob_path("s3://my-bucket/product-images/*.jpg")

# Download the bytes behind each path, then decode them into images
df = df.with_column("image", decode_image(download(daft.col("path"))))

# Prompt with both text and images
df = df.with_column(
    "description",
    prompt(
        messages=[
            daft.lit("Describe this product image in detail:"),
            daft.col("image")
        ],
        model="gpt-5"
    )
)

This works seamlessly with PDFs, Markdown files, HTML, CSV, and any other file type:

import daft

from daft.functions import prompt, file

df = daft.from_glob_path("hf://datasets/Eventual-Inc/sample-files/papers/*.pdf")

df = df.with_column("file", file(daft.col("path")))

df = df.with_column(

"summary",

prompt(

messages=[daft.lit("Summarize this research paper:"), daft.col("file")],

model="gpt-5-nano"

)

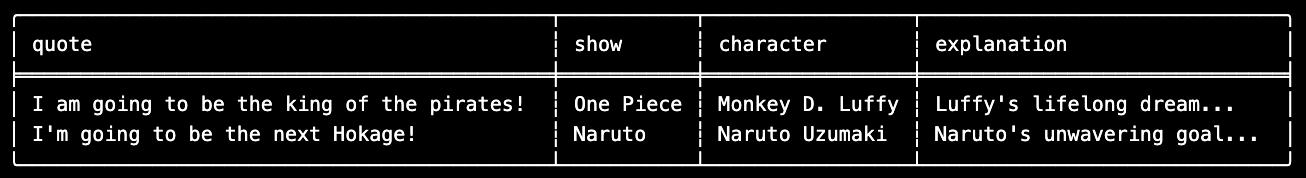

)3. Structured Outputs with Native Struct Support

Daft natively converts Pydantic models to DataFrame columns with nested struct types. This makes structured generation feel natural in a vectorized context:

import daft
from daft.functions import prompt, unnest
from pydantic import BaseModel, Field

# Schema for the structured output; each field becomes a struct field
class Anime(BaseModel):
    show: str = Field(description="The name of the anime show")
    character: str = Field(description="The name of the character")
    explanation: str = Field(description="Why the character says the quote")

df = daft.from_pydict({
    "quote": [
        "I am going to be the king of the pirates!",
        "I'm going to be the next Hokage!",
    ]
})

df = df.with_column(
    "classification",
    prompt(
        daft.col("quote"),
        system_message="Classify the anime based on the quote.",
        return_format=Anime,
        model="gpt-5-nano",
    )
).select("quote", unnest(daft.col("classification")))

df.show(format="fancy", max_width=80)
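If the unnest step feels abstract, the following plain-Python sketch mimics what it does to a single row: the struct column disappears and each of its fields becomes a top-level column. The `unnest_struct` helper is hypothetical, and the field values are hand-written stand-ins rather than real model output; Daft does this on typed columns, not on dictionaries.

```python
def unnest_struct(rows, struct_col):
    """Replace one dict-valued column with one top-level column per field."""
    flat = []
    for row in rows:
        out = {k: v for k, v in row.items() if k != struct_col}
        out.update(row[struct_col])  # promote struct fields to columns
        flat.append(out)
    return flat


# Hand-written stand-in for a row after the prompt step (not real model output).
rows = [
    {
        "quote": "I'm going to be the next Hokage!",
        "classification": {
            "show": "<show>",
            "character": "<character>",
            "explanation": "<explanation>",
        },
    }
]

flat_rows = unnest_struct(rows, "classification")
print(list(flat_rows[0].keys()))
```

The resulting column order (quote first, then the struct fields in declaration order) matches what you would expect from selecting "quote" followed by the unnested struct.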

4. Flexible Template Composition

Build dynamic prompts using Daft's format function or user-defined functions:

import daft
from daft.functions import prompt, format

# Build a prompt expression from a literal language and a question column
def answer_in_language(language: str, column_name: str) -> daft.Expression:
    return format(
        "Answer the following question in {}: {}",
        daft.lit(language),
        daft.col(column_name)
    )

df = daft.from_pydict({
    "question": [
        "What is the capital of France?",
        "Who invented the telephone?",
    ]
})

df = df.with_column(
    "spanish_answer",
    prompt(
        answer_in_language("Spanish", "question"),
        model="gpt-5"
    )
)

Or use row-wise @daft.func() user-defined functions for more complex logic:

@daft.func
def build_prompt(context: str, question: str, max_words: int) -> str:
    return f"""
    Context: {context}
    Question: {question}
    Answer in at most {max_words} words.
    """

df = df.with_column(
    "answer",
    prompt(
        build_prompt(daft.col("context"), daft.col("question"), daft.lit(50)),
        model="gpt-5"
    )
)

Swap Providers Without Changing Code

One of the most powerful aspects of Daft's AI functions is the provider abstraction. The same prompt call works across OpenAI, local models, vLLM servers, and more--just change the provider:

OpenAI (Default)

import daft
from daft.functions import prompt

# OpenAI is the default - just set your API key
df = df.with_column("result", prompt(daft.col("input"), model="gpt-5"))

OpenAI-Compatible Providers (OpenRouter, etc.)

import os

import daft
from daft.functions import prompt

daft.set_provider(
    "openai",
    base_url="https://openrouter.ai/api/v1",
    api_key=os.environ.get("OPENROUTER_API_KEY")
)

df = df.with_column(
    "result",
    prompt(daft.col("input"), model="nvidia/nemotron-nano-9b-v2:free")
)

Local Models with LM Studio

df = df.with_column(
    "result",
    prompt(
        daft.col("input"),
        model="google/gemma-3-4b",
        provider="lm_studio"
    )
)

vLLM Online Serving

daft.set_provider(
    "openai",
    api_key="none",
    base_url="http://localhost:8000/v1"
)

df = df.with_column(
    "result",
    prompt(daft.col("input"), model="google/gemma-3-4b-it")
)

vLLM Offline Serving w/ Prefix Caching (Beta)

For batch inference workloads, our new vLLM Prefix Caching provider can cut inference time in half:

df = df.with_column(
    "result",
    prompt(
        daft.col("input"),
        provider="vllm-prefix-caching",
        model="Qwen/Qwen-8B"
    )
)

This provider implements Dynamic Prefix Bucketing and Streaming-Based Continuous Batching to maximize GPU utilization and cache hit rates.
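For intuition on why bucketing by prefix helps, consider this deliberately simplified sketch. It groups prompts by a fixed number of leading words so that requests sharing a prefix run back to back, which is when a prefix cache is most likely to be reused. The `bucket_by_prefix` helper and its `prefix_words` parameter are hypothetical, and a fixed-length key is a stand-in for the dynamic bucketing the provider performs; this is not Daft's or vLLM's actual algorithm.

```python
from collections import defaultdict


def bucket_by_prefix(prompts, prefix_words=4):
    """Group prompts whose first `prefix_words` words match."""
    buckets = defaultdict(list)
    for p in prompts:
        key = " ".join(p.split()[:prefix_words])
        buckets[key].append(p)
    return dict(buckets)


prompts = [
    "Classify the sentiment of: great phone",
    "Summarize this research paper: attention",
    "Classify the sentiment of: battery died",
    "Summarize this research paper: diffusion",
]

buckets = bucket_by_prefix(prompts)

# Emit prompts bucket by bucket so shared prefixes are processed together.
ordered = [p for group in buckets.values() for p in group]
for p in ordered:
    print(p)
```

In the interleaved input order, each request switches prefix; after bucketing, the two classification prompts and the two summarization prompts are adjacent.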

Advanced Features

Tool Calling at Scale

Use OpenAI's built-in tools like web search, or define custom functions for agentic workflows:

import daft
from daft.functions import prompt

df = daft.from_pydict({
    "query": ["Buy one get one free burritos in SF right now."]
})

df = df.with_column(
    "search_results",
    prompt(
        daft.col("query"),
        model="gpt-5",
        tools=[{"type": "web_search"}]
    )
)

Reasoning Models

Control compute allocation with the reasoning parameter for GPT-5 and other reasoning-capable models:

df = df.with_column(
    "deep_analysis",
    prompt(
        daft.col("complex_question"),
        model="gpt-5.1",
        reasoning={"effort": "high"},
    )
)

Working with Different OpenAI APIs

Daft supports both the new Responses API (default for GPT-5) and the Chat Completions API:

# Responses API (default for GPT-5)
df = df.with_column(
    "result",
    prompt(
        daft.col("input"),
        model="gpt-5",
        max_output_tokens=200  # Note: max_output_tokens, not max_tokens
    )
)

# Chat Completions API (for GPT-4.1 and earlier)
df = df.with_column(
    "result",
    prompt(
        daft.col("input"),
        model="gpt-4.1-2025-04-14",
        max_tokens=200,  # Note: max_tokens
        temperature=0.7,
        use_chat_completions=True
    )
)

Structured Outputs with vLLM Online Serving

vLLM's guided decoding for classification and regex-constrained generation works seamlessly:

import daft
from daft.functions import prompt, format

# Classification with guided choice
df = df.with_column(
    "sentiment",
    prompt(
        daft.col("review"),
        model="google/gemma-3-4b-it",
        extra_body={"structured_outputs": {"choice": ["positive", "negative"]}}
    )
)

# Regex-constrained generation
df = df.with_column(
    "email",
    prompt(
        format("Generate an email for {}", daft.col("name")),
        model="google/gemma-3-4b-it",
        extra_body={"structured_outputs": {"regex": r"\w+@\w+\.com\n"}}
    )
)

Conclusion

With massively parallel LLM generation now possible thanks to Daft's prompt function, you can scale LLM operations from a single row to millions without changing your code. Whether you're generating synthetic data, building document intelligence pipelines, or running batch inference, Daft provides the foundation for production-grade AI workloads.

The future of prompt engineering is declarative, scalable, and multimodal.

Try it out and let us know what you build!